Your AI agent went live. Now the real work starts.

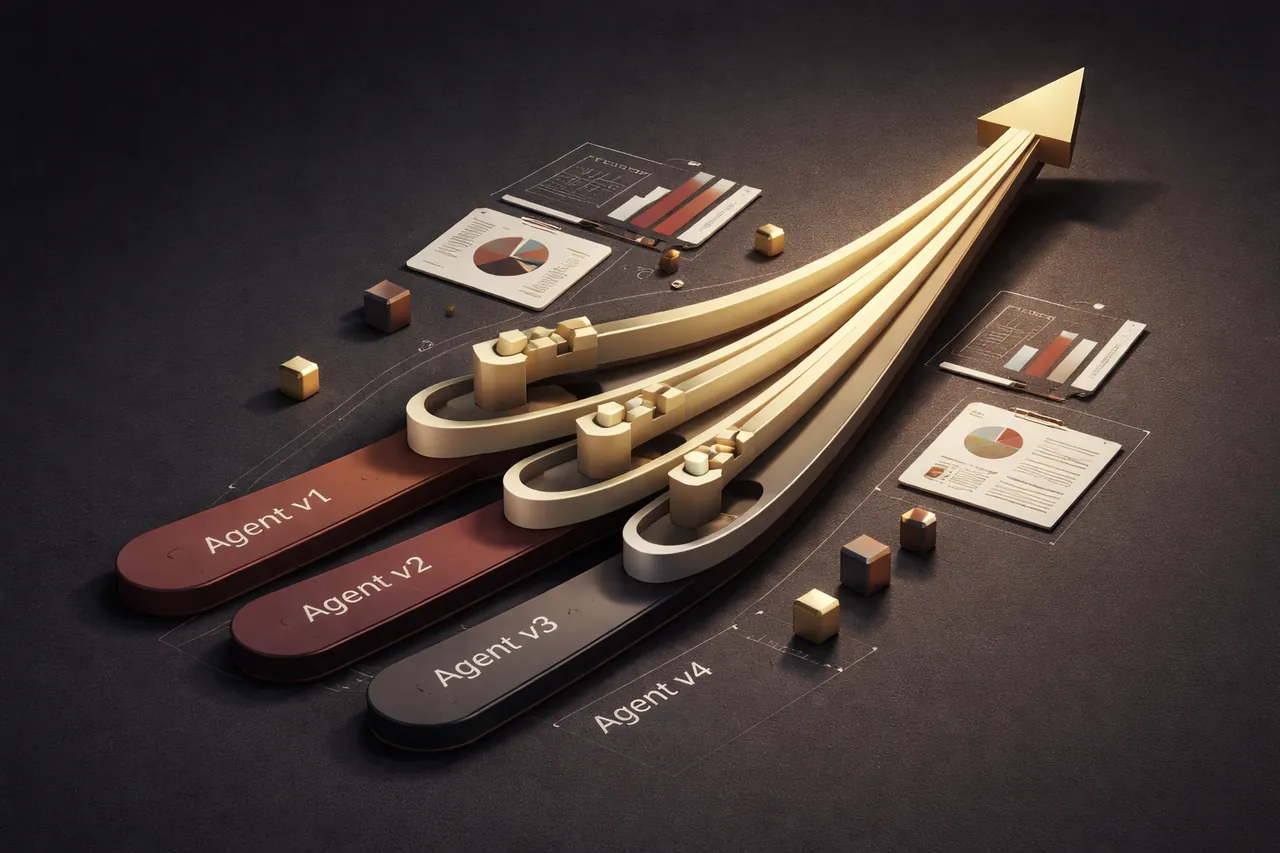

Agents don't improve on their own. A structured iteration loop (measure, test, refine) is what separates a v1 that stagnates from one that gets meaningfully better over time.

Most AI projects follow the same arc. A team spends weeks scoping, building, and testing an agent, ships it, and moves on to the next project. The agent keeps running in production, and nobody touches it.

Slowly, it starts to underperform. The business shifts, customer queries evolve, the underlying model gets a silent update. Prompts that worked well in March are producing mediocre outputs by June, and by the time anyone notices, there’s often no clean way to improve things without risking something else. This is the deployment trap, and it’s more common than most teams would like to admit.

After the launch

Companies budget carefully for building: design, engineering, testing, rollout. Those costs are visible and easy to put in a project plan.

What typically gets left out is the ongoing work that follows: measuring whether the agent is still doing what it was built to do, testing whether it could do it better, and making confident changes without guessing. AI agents need feedback and ongoing calibration in a way that standard software features don’t.

“Without [evaluations], it’s easy to get stuck in reactive loops — catching issues only in production, where fixing one failure creates others.” — Anthropic Engineering, Demystifying Evals for AI Agents

In most deployments we’ve reviewed, the gap between launch performance and what systematic iteration could produce is somewhere between 20% and 40% on measurable quality metrics (conversion rate, task completion, or accuracy, depending on the use case). That gap tends to come from an absent feedback loop rather than poor initial work. The engineers who built the agent made dozens of small decisions about prompting, framing, and output structure. Some were right, some were slightly off, and at the time there was no clean way to tell which was which. Without a structured way to test and measure, those slightly off decisions stay baked in indefinitely.

Running the loop

The process follows a clear pattern once the infrastructure is in place. You start with a baseline agent and one measurable outcome: say, the percentage of users who take the next step you want them to take. You run the agent, measure that number, and establish a baseline. Call it 31%.

Then you form a hypothesis. Maybe the agent’s opening message is too generic. You write three variations, split traffic across all four versions, and run until the results reach statistical significance. One version pulls 36%, so you make it the new baseline and archive the others.
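A minimal sketch of the significance check in that step. It assumes a conversion-style metric (each user either takes the next step or doesn't) and uses a two-proportion z-test. The function name, variant names, and sample sizes are hypothetical; only the 31% baseline and the 36% winner come from the example above. Because three variants are each compared with the baseline, the sketch applies a Bonferroni correction to the usual 5% threshold.

```python
import math


def two_proportion_z_test(conv_a: int, n_a: int, conv_b: int, n_b: int) -> tuple[float, float]:
    """Return (z, two-sided p-value) for H0: arms A and B convert at the same rate."""
    p_a = conv_a / n_a
    p_b = conv_b / n_b
    # Pooled rate under the null hypothesis that both arms convert equally.
    p_pool = (conv_a + conv_b) / (n_a + n_b)
    se = math.sqrt(p_pool * (1 - p_pool) * (1 / n_a + 1 / n_b))
    z = (p_b - p_a) / se
    # Two-sided p-value: 2 * (1 - Phi(|z|)) equals erfc(|z| / sqrt(2)).
    p_value = math.erfc(abs(z) / math.sqrt(2))
    return z, p_value


# Hypothetical counts: (users who took the next step, users who saw the variant).
baseline = (620, 2000)  # 31%
variants = {
    "opener_b": (720, 2000),  # 36%
    "opener_c": (650, 2000),
    "opener_d": (600, 2000),
}

# Bonferroni: split the 5% error budget across the three comparisons with baseline.
alpha = 0.05 / len(variants)
for name, (conv, n) in variants.items():
    z, p = two_proportion_z_test(*baseline, conv, n)
    print(f"{name}: rate={conv / n:.1%} z={z:.2f} p={p:.4f} significant={p < alpha}")
```

This test assumes the sample size was fixed before the experiment started. Checking results repeatedly and stopping at the first significant reading inflates the false-positive rate, so in practice teams either fix the sample size up front or use a sequential testing method designed for continuous monitoring.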

Next week, another hypothesis and another test. Each improvement is modest in isolation, but stack six months of them and the agent is performing 50% better than the one you launched, with a clear record of which changes drove each gain.
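The arithmetic behind stacking modest gains is multiplicative: each promoted change lifts the previous baseline, so relative gains compound. The sketch below keeps a hypothetical ledger of promoted changes and computes both the cumulative lift and each change's share of it. The record format, change descriptions, weeks, and intermediate rates are invented for illustration; only the 31% to 36% step comes from the example above. Shares are measured on a log scale so that multiplicative lifts add up to 100%.

```python
import math
from dataclasses import dataclass


@dataclass(frozen=True)
class PromotedChange:
    """One winning experiment that became the new baseline (hypothetical record format)."""
    week: int
    description: str
    rate_before: float  # baseline rate when the test started
    rate_after: float   # winning variant's measured rate

    @property
    def relative_lift(self) -> float:
        return self.rate_after / self.rate_before - 1


def cumulative_lift(changes: list[PromotedChange]) -> float:
    """Relative improvement of the latest baseline over the launch baseline."""
    total = 1.0
    for change in changes:
        total *= 1 + change.relative_lift
    return total - 1


def attribution_shares(changes: list[PromotedChange]) -> dict[str, float]:
    """Each change's share of the total gain, using log lifts so the shares sum to 1.

    Assumes the ledger holds only winners, so the total log lift is positive.
    """
    logs = {c.description: math.log1p(c.relative_lift) for c in changes}
    total = sum(logs.values())
    return {name: value / total for name, value in logs.items()}


# Hypothetical six-month ledger, starting from the 31% launch baseline.
ledger = [
    PromotedChange(1, "more specific opening message", 0.31, 0.36),
    PromotedChange(5, "shorter answers", 0.36, 0.39),
    PromotedChange(11, "clarifying question before quoting", 0.39, 0.42),
    PromotedChange(17, "structured summary at the end", 0.42, 0.44),
    PromotedChange(24, "explicit next-step link", 0.44, 0.465),
]

print(f"cumulative lift: {cumulative_lift(ledger):.1%}")
for name, share in attribution_shares(ledger).items():
    print(f"  {name}: {share:.0%} of the gain")
```

This treats each measured lift as if it still held after later changes shipped. Interactions between changes or shifts in traffic can make the real cumulative figure differ, which is one reason to re-measure the current baseline periodically rather than relying only on the ledger.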


Building this loop from scratch (versioning, sandboxing, measurement, attribution) takes most teams several months of engineering work, and most teams have other priorities. So the agent stays at version one.
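Of the four pieces listed, versioning and traffic assignment are the easiest to sketch in isolation. The example below assumes each variant is a frozen configuration record and assigns users by hashing their ID together with an experiment name, so a returning user keeps seeing the same version and every logged outcome can be attributed to a specific version. The `AgentVariant` fields, version labels, opening messages, experiment name, and equal weights are all hypothetical; sandboxing and measurement are not covered here.

```python
import hashlib
from collections import Counter
from dataclasses import dataclass


@dataclass(frozen=True)
class AgentVariant:
    """A versioned agent configuration (hypothetical fields for illustration)."""
    version: str
    opening_message: str
    traffic_weight: float  # share of traffic; weights in one experiment should sum to 1


def assign_variant(user_id: str, experiment: str, variants: list[AgentVariant]) -> AgentVariant:
    """Deterministically map a user to one variant of an experiment."""
    # Salting with the experiment name keeps assignments independent across experiments.
    digest = hashlib.sha256(f"{experiment}:{user_id}".encode("utf-8")).digest()
    # Interpret the first 8 bytes as an integer and scale it to a point in [0, 1].
    point = int.from_bytes(digest[:8], "big") / 2**64
    cumulative = 0.0
    for variant in variants:
        cumulative += variant.traffic_weight
        if point < cumulative:
            return variant
    return variants[-1]  # guards against floating-point rounding at the upper edge


variants = [
    AgentVariant("v1.0-baseline", "Hi! How can I help?", 0.25),
    AgentVariant("v1.1-a", "Hi! Are you looking to book, change, or cancel?", 0.25),
    AgentVariant("v1.1-b", "Welcome back. What would you like to get done today?", 0.25),
    AgentVariant("v1.1-c", "Tell me what you need and I'll take it from there.", 0.25),
]

# Each logged outcome would carry the assigned version, which is what enables attribution.
counts = Counter(
    assign_variant(f"user-{i}", "opening-message-test", variants).version
    for i in range(10_000)
)
print(counts)
```

Hashing rather than random assignment means no per-user state has to be stored to keep the experience consistent, and changing the experiment name reshuffles users for the next test.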

The infrastructure you’d otherwise have to build

We build the infrastructure for this process. Teams use our platform to define their agents, run isolated test variants, and measure outcomes without having to engineer the loop themselves. What typically takes months to build takes hours to configure.

Six months from now, the agent you’re running should be materially better than the one you launched, because you’ve put it through a disciplined improvement process.

A few questions worth asking if you have an agent running in production:

What metric tells you the agent is working? If the answer isn’t precise, defining that metric is the first step, before you measure anything else.

How would you know if the agent got 10% worse tomorrow? Waiting for complaints is a monitoring strategy, but a slow one. Performance can erode steadily for weeks before users start escalating.

When did you last update the agent’s prompts or configuration? If it’s been more than a month and the agent is handling real queries, something has almost certainly shifted since you last looked.
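For the question of how you would know if the agent got 10% worse tomorrow, one hedged answer is an automated check on the same metric used for experiments. The sketch below assumes daily success and total counts are already logged, compares a trailing seven-day rate with a fixed baseline, and alerts on a relative drop of 10% or more. The class name, window length, threshold, and daily counts are illustrative assumptions, not recommendations.

```python
from collections import deque


class RegressionMonitor:
    """Flags a sustained relative drop in a daily success rate versus a fixed baseline."""

    def __init__(self, baseline_rate: float, window_days: int = 7, max_relative_drop: float = 0.10):
        self.baseline_rate = baseline_rate
        self.max_relative_drop = max_relative_drop
        # Each entry is (successes, total) for one day; old days fall off automatically.
        self.window = deque(maxlen=window_days)

    def record_day(self, successes: int, total: int) -> bool:
        """Add one day's counts and return True if the trailing window shows a regression."""
        self.window.append((successes, total))
        if len(self.window) < self.window.maxlen:
            return False  # not enough history to judge yet
        window_rate = sum(s for s, _ in self.window) / sum(t for _, t in self.window)
        return window_rate <= self.baseline_rate * (1 - self.max_relative_drop)


# Hypothetical daily counts: a steady 31% for a week, then a drop to 27%.
daily_counts = [(310, 1000)] * 7 + [(270, 1000)] * 7

monitor = RegressionMonitor(baseline_rate=0.31)
first_alert_day = None
for day, (successes, total) in enumerate(daily_counts, start=1):
    # record_day runs first so every day is added to the window.
    if monitor.record_day(successes, total) and first_alert_day is None:
        first_alert_day = day
print(f"first alert on day {first_alert_day}")
```

With these hypothetical counts the alert fires on day 13, six days after the drop begins, without anyone waiting for complaints. A longer window smooths out day-to-day noise but delays the alert; a shorter one reacts faster at the cost of more false alarms.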

We’ll be writing regularly about what we’re learning and what the data shows. If you’re thinking through any of this for your own business, we’d be glad to talk.

— The Levain Labs team

Sources

- Anthropic Engineering: Demystifying Evals for AI Agents

2025. "Without [evaluations], it's easy to get stuck in reactive loops — catching issues only in production, where fixing one failure creates others."

- AWS: Evaluating AI Agents — Real-World Lessons from Building Agentic Systems at Amazon

February 2026. "Production evaluation monitors real-world performance across diverse user behaviors, usage patterns, and edge cases not represented before production deployment to identify performance degradation over time."

- Pan, M.Z. et al. (UC Berkeley): Measuring Agents in Production

arXiv:2512.04123, December 2025. Survey of 306 AI practitioners: 75% of production teams evaluate agents without formal benchmark sets, relying instead on A/B testing, user feedback, and production monitoring.

- PwC: AI Agent Survey

May 2025. 308 US executives surveyed: 79% report AI agents already adopted in their companies, but broad adoption does not consistently translate to measurable impact.